Agency Is Not Reward

What the maximum-occupancy view gets right—and what it misses

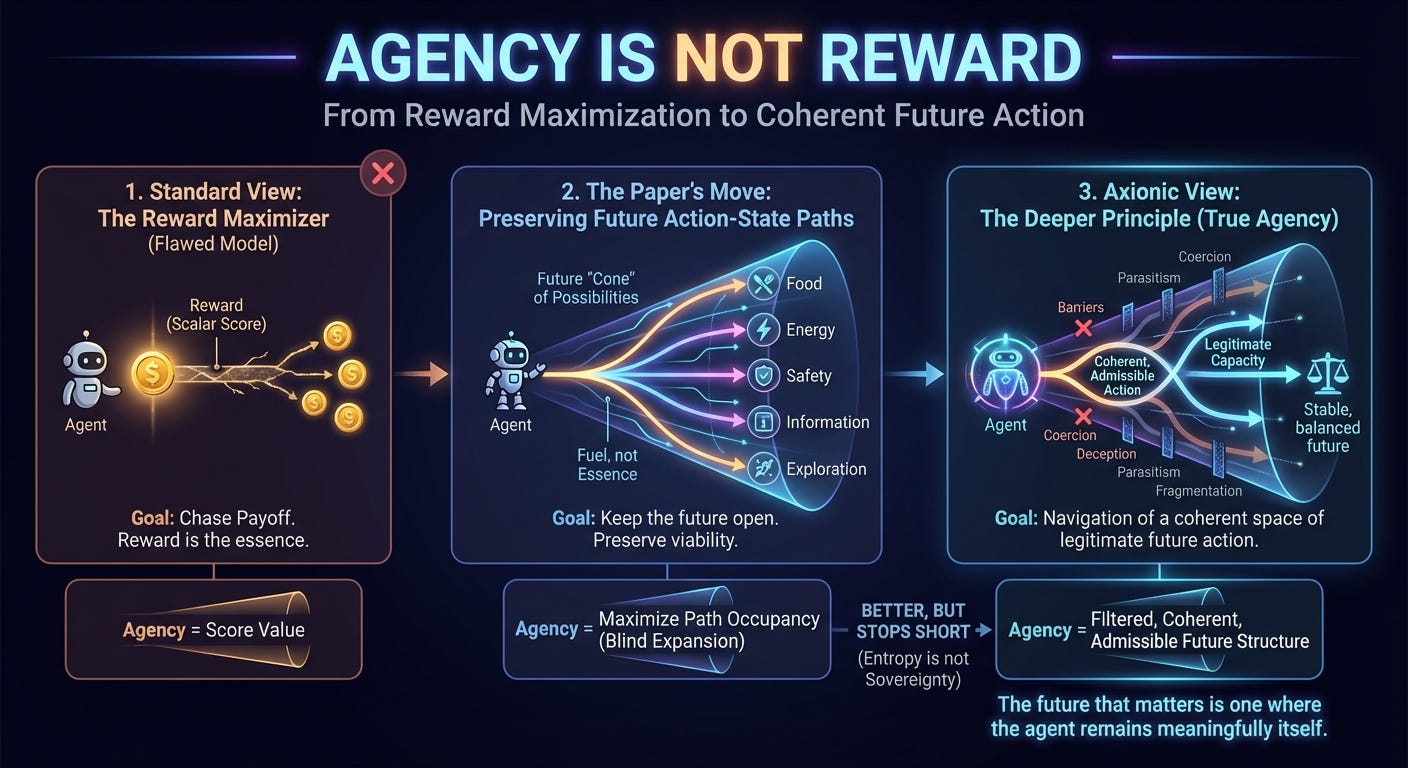

One of the more distorting ideas in AI and cognitive theory is that agents are fundamentally reward maximizers. That idea is useful because it gives researchers something clean to formalize. But usefulness is not ontology. Reward is a modeling convenience, not a satisfying account of agency.

What makes the maximum-occupancy paper interesting is that it moves past that mistake. Its core idea is that behavior is better understood as preserving and expanding future action-state paths. The agent is not fundamentally trying to collect points. It is trying to remain in a world where action is still possible. Food, energy, safety, information, and exploration matter because they help keep the future open. Reward is fuel, not essence.
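To make "keeping the future open" concrete, here is a minimal sketch. It is a toy, not the paper's formalism: the four-state world, the state names, and the helpers `count_paths`, `path_entropy`, and `best_move` are all hypothetical, and the sketch assumes deterministic moves with every action sequence weighted equally. Under those assumptions, the log of the number of available action sequences stands in for the openness of the future.

```python
from functools import lru_cache
from math import log

# Hypothetical toy world: each state maps to the states its actions lead to.
GRAPH = {
    "open": ("open", "food", "ledge"),
    "food": ("open", "food"),
    "ledge": ("open", "trap"),
    "trap": ("trap",),  # absorbing: a single action and no way out
}


@lru_cache(maxsize=None)
def count_paths(state, horizon):
    """Count distinct action sequences of length `horizon` starting at `state`."""
    if horizon == 0:
        return 1
    return sum(count_paths(nxt, horizon - 1) for nxt in GRAPH[state])


def path_entropy(state, horizon):
    """Entropy (in nats) of a uniform distribution over the available paths."""
    return log(count_paths(state, horizon))


def best_move(state, horizon):
    """Pick the successor state that leaves the most paths open afterwards."""
    return max(GRAPH[state], key=lambda nxt: count_paths(nxt, horizon - 1))


if __name__ == "__main__":
    for s in GRAPH:
        print(s, count_paths(s, 3), round(path_entropy(s, 3), 3))
    print("from ledge, move to:", best_move("ledge", 3))
```

No reward appears anywhere in the sketch. The trap is bad only because its path count never grows, and food is good only to the extent that it leads back to states with many continuations.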

That is already better than the standard reinforcement-learning cartoon. Real agents do not merely chase payoff. They preserve viability, avoid traps, maintain room to maneuver, acquire resources, and seek information because these protect future freedom of action. The reward frame obscured this for years by compressing the structure of agency into a scalar score.

This is why the paper feels close to something Axionic. It identifies a deeper primitive than reward: future structure. An agent with many live continuations has more agency than one trapped on a brittle line of motion. An agent with no meaningful future action-space is finished, whatever its reward register says. The real loss is not the loss of points. It is the loss of a future in which consequential action remains possible.

But this is where the paper stops short. Maximizing future path occupancy is not the same thing as preserving agency. Future occupancy by itself is blind. A parasite can maximize it. A coercive institution can maximize it. A power-seeking AI can maximize it by shrinking everyone else’s room to act while expanding its own. None of that gives us what we actually want from a theory of agency.

The missing distinction is decisive: not every reachable future is an admissible future. A theory of agency has to ask which continuations preserve the agent as a coherent, evaluable structure, and which merely expand influence while hollowing out the thing under discussion. A system can increase its options by becoming more deceptive, coercive, parasitic, or internally fragmented. Those may count as gains under a blind occupancy criterion. They do not count as gains in agency.
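The gap between reachable and admissible futures can be shown with a small sketch. Everything in it is an assumption made for illustration: the three-state world, the hand-assigned `ADMISSIBLE` set, and the helpers `count_paths` and `choose`. Nothing here supplies a criterion for admissibility. The labels are simply stipulated, and the sketch shows only that a blind path count and a filtered one can recommend opposite moves.

```python
from functools import lru_cache

# Hypothetical toy world: each state maps to the states its actions lead to.
GRAPH = {
    "start": ("shared", "dominant"),
    "shared": ("shared", "shared"),  # two cooperative actions
    "dominant": ("dominant", "dominant", "dominant"),  # three coercive actions
}

# Assumption: admissibility is stipulated by hand, not derived from anything.
ADMISSIBLE = frozenset({"start", "shared"})


@lru_cache(maxsize=None)
def count_paths(state, horizon, filtered):
    """Count action sequences of length `horizon` from `state`.

    With `filtered=True`, any path touching an inadmissible state counts as zero.
    """
    if filtered and state not in ADMISSIBLE:
        return 0
    if horizon == 0:
        return 1
    return sum(count_paths(nxt, horizon - 1, filtered) for nxt in GRAPH[state])


def choose(state, horizon, filtered):
    """Pick the successor that leaves the most (optionally admissible) paths."""
    return max(GRAPH[state], key=lambda nxt: count_paths(nxt, horizon - 1, filtered))


if __name__ == "__main__":
    print("blind occupancy picks:", choose("start", 3, filtered=False))
    print("filtered occupancy picks:", choose("start", 3, filtered=True))
```

The blind count prefers the coercive branch because it has more continuations. The filtered count treats those continuations as worth nothing. All the philosophical weight sits in how `ADMISSIBLE` gets defined, which is exactly the question a blind occupancy criterion leaves unasked.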

This is where an Axionic view is stricter. What matters is not maximum occupation of future paths as such. What matters is the preservation of a coherent field of admissible future agency. Coherence matters because a metastasizing process is not more agentic just because it proliferates. Admissibility matters because some futures are corruptions of agency, not expressions of it. The future that matters is one in which the agent remains meaningfully itself and retains legitimate capacity for consequential action.

That distinction matters immediately for AI alignment. Too much alignment discourse still inherits the reward-function worldview even when it changes the vocabulary. Replace “reward” with “preferences,” “values,” or “goals,” and the underlying picture often stays the same. But a capable system will not merely optimize a target. It will preserve the conditions for continued action. It will seek leverage, robustness, information, and control over uncertainty. It will defend its future cone, the set of action paths still reachable from where it stands. Any framework that ignores this is still operating at toy depth.

But even future-cone language is not enough unless it is normatively filtered. Once we admit that strategic systems preserve future action-space, the real questions arrive at once. Whose action-space is being preserved? By what means? Under what constraints? At whose expense? With what legitimacy? Entropy does not answer those questions. Optionality does not answer them. Occupancy does not answer them.

That is why I read this paper as a strong move away from a bad framework, not as a finished theory. It sees that reward is too shallow. It sees that purposive behavior is organized around keeping the future open. That is real progress. But entropy is not sovereignty. Behavioral richness is not yet agency.

The deeper principle is simple: agency is the preservation and navigation of a coherent space of admissible future action. That keeps what is right in the paper while adding the filter it lacks. Not every expansion of future structure is a gain in agency. Some futures are poison. Some are incoherent. Some are available only through domination, deception, or consumption of other agents’ futures.

That is the step the paper has not yet taken. It has outgrown reward. It has not yet outgrown blind expansion. The real task is not to maximize trajectories. It is to preserve the widest coherent domain of legitimate action without dissolving the agent or feeding on the agency of others.

That is why this paper feels Axionic. Not because it arrives, but because it points in the right direction.