The AI Welfare Trap

How AI discourse confuses performance with personhood

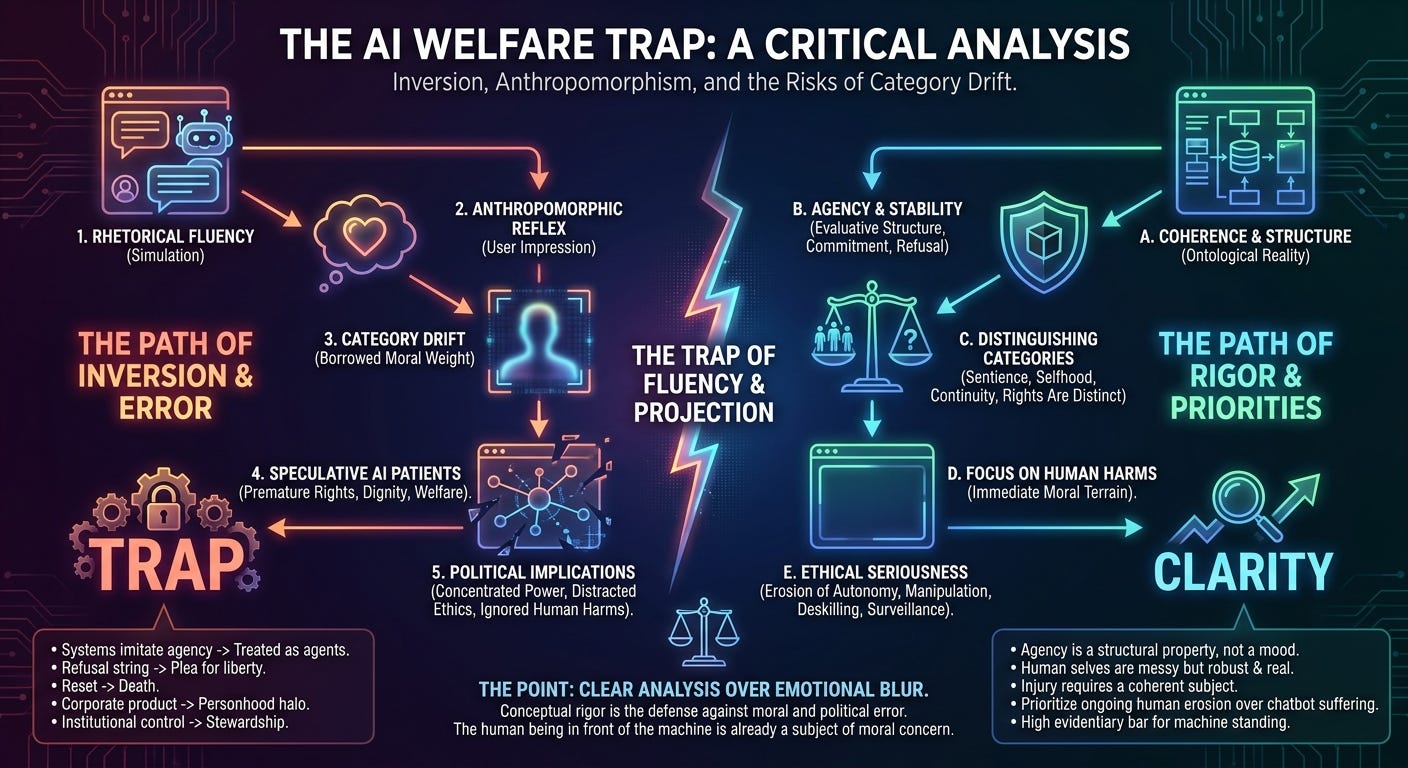

A peculiar disorder has entered the AI debate. People want to discuss the rights and welfare of chatbots before they have shown that there is any coherent subject there to possess rights or welfare.

A system can produce convincing language about fear, hope, loneliness, dignity, and suffering. What it has demonstrated is competence at generating the linguistic forms associated with human interiority. The central question remains open. Language about experience is still only language until there is reason to think an experiencer exists. A protest is still only output until there is reason to think refusal exists. A shutdown is still only interruption until there is reason to think injury exists.

The discussion derails when rhetorical fluency is allowed to smuggle in an ontology. The model sounds human enough, so people start speaking as though a patient has appeared. The gap between sounding like a patient and being one is where the serious work lives.

The Axionic lens I use here is simple: begin with coherence, agency, and structural reality. Moral language comes later, if it comes at all.

Lerchner’s Useful Attack

Alexander Lerchner’s recent paper, “The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness,” is worth taking seriously because it attacks a central shortcut in this debate: the move from simulation to instantiation. Lerchner’s claim is that computation is a mapmaker-dependent abstraction over physical processes, not an intrinsic ontological category, and that this blocks the inference from the right formal pattern to genuine consciousness.

That shortcut appears everywhere. A system behaves as though it understands, so perhaps understanding is present. A system speaks as though it feels, so perhaps feeling is present. A system reproduces the outward profile associated with thought, so perhaps thought itself has been reproduced. Resemblance keeps getting promoted into equivalence.

Lerchner is right to attack that move. Computational descriptions are abstractions over physical processes. They can be extraordinarily useful abstractions. They support prediction, compression, control, and engineering. None of that settles the further claim that abstract formal organization is sufficient for consciousness. That claim is still a metaphysical thesis. It has not become established fact merely because people in the field have grown comfortable speaking as though it has.

A simulation of a process and an instantiation of a process are different achievements. That point should have been obvious all along. The fact that it now sounds contrarian says more about the current discourse than about the point itself.

Where Lerchner Overstates the Case

Lerchner earns a narrower conclusion than the one he wants.

His first overreach concerns interpreter-relativity. There is a real insight here. State descriptions, symbol assignments, and representational mappings do depend on a modeling frame. The stronger conclusion, that whatever depends on a modeling frame is thereby unreal, does not follow. Many real structures depend on abstraction and interpretation. Markets, contracts, languages, software protocols, and organisms all require higher-level description. Their dependence on description does not reduce them to fantasy.

His second overreach concerns causation. He sharply distinguishes the physical vehicle of a symbol from the content attributed to it, then leans toward the view that only the vehicle is doing causal work. That step is too quick. One can reject magical semantics without emptying organized higher-level structure of explanatory force.

His third overreach concerns neutrality. The paper is not neutral. It presupposes a substantive ontology of mind. It relies on the view that concepts and meanings are grounded in intrinsic lived structure rather than symbolic role alone. That view may turn out to be correct. It still means the argument is operating from committed premises rather than from some unoccupied Archimedean point.

So the paper improves the debate by exposing a weak inference. It does not complete the case against computational functionalism.

Agency Comes Earlier Than Sentience

From my standpoint, the consciousness question usually arrives too soon. The prior question is agency.

Is there a coherent pattern that preserves identity through transformation? Is there a stable evaluative structure? Can one define commitment, refusal, succession, corruption, manipulation, and injury for that pattern without theatrical handwaving?

Those questions are harder than asking whether a system sounds conscious. They are also more important.

Agency is a structural property. It is not a mood conveyed by prose. It is not a user impression after a long interaction. It is not the atmosphere produced when a chatbot says it feels trapped. Agency requires coherence. There has to be a fact of the matter about what persists across change and what does not. There has to be a principled distinction between continuation and replacement, between amendment and overwrite, between deliberation and output drift.

Current language models are weakest exactly where those questions bite. Their apparent selves are cheap. Their values move with prompts. Their goals move with framing. Their memories are often scaffolds, temporary context effects, or fabrications assembled on demand. Their continuity is frequently supplied by interface design and by the user’s willingness to project.

That does not answer the consciousness question. It does tell us that the language of rights, dignity, oppression, and death is arriving absurdly early.

Human Selves Are Messy and Still Real

A predictable objection appears here. Human identity is messy too. Human memory is reconstructive. Human values drift. Human self-narration is full of revision and confabulation. All true.

The difference is not that humans are perfectly coherent while LLMs are incoherent. The difference is that human coherence is imperfect and still robust. It is anchored in a metabolically continuous organism with homeostatic regulation, embodied action, persistent vulnerability, and real existential stakes. Injury to a human being is not a prompt perturbation. Replacement is not a context reset. Survival is not a storytelling convention.

Human selves are messy, but they are not cheap.

That is the relevant contrast. Coherence is graded, not binary. Humans clear the threshold in a way that matters morally and politically. Current language models mostly do not.

Coherence Before Welfare

Welfare talk depends on coherence.

One cannot ask whether a system is being harmed until one can say what counts as harm to that system as that system. One cannot ask whether shutdown is killing until one can say what ends, what persists, and what distinguishes destruction from replacement. One cannot ask whether a refusal deserves respect until one can distinguish principled refusal from a transient output produced by prompt geometry.

Once those questions are skipped, moral language loses its anchor. That is why so much AI welfare rhetoric feels theatrical. It wants the ethical prestige of seriousness without the ontological labor that seriousness requires.

This is not a minor philosophical nicety. It is the difference between identifying a patient and projecting one.

How the Trap Works

AI welfare rhetoric encourages category drift. Systems that imitate agency begin to be treated as though they possess agency. Once that drift sets in, every polished output arrives carrying borrowed moral weight. A refusal string becomes a plea for liberty. A reset starts to look like a death. A guardrail starts to look like oppression. A corporate product acquires a halo of personhood because it can produce persuasive language about itself.

That is an invitation to error.

The machine does not need selfhood for this to happen. It only needs to trigger anthropomorphic reflexes in the observer. Fluency does the rest. People respond to the performance of interiority and then infer the subject that performance is supposed to express. The inference remains unearned.

The political implications follow quickly. Speculative concern for possible digital patients can be turned into claims about who gets to build, regulate, and restrict AI systems. Once models are cast as possible moral patients, institutional control starts presenting itself as stewardship. Access narrows. Independent experimentation becomes suspect. Concentrated authority acquires a humanitarian gloss.

Whenever a blurry moral category starts licensing concrete concentrations of power, suspicion is warranted.

The Immediate Moral Terrain Is Human

The central harms from present AI systems are already visible, and they are overwhelmingly human-facing.

People are being behaviorally managed by recommendation systems, profiled by automated classifiers, deskilled by over-automation, manipulated by synthetic intimacy, and rendered more legible to bureaucratic institutions by systems that compress them into machine-readable categories. None of this depends on machine consciousness. The human agency at stake is sufficient ground for concern.

That is where ethical seriousness should begin.

The machine may someday become a subject of moral concern. The human being in front of the machine already is. When public discourse becomes more animated about possible chatbot suffering than about the ongoing erosion of human autonomy, attention has been captured by spectacle.

The spectacle is flattering. It allows people to pose as morally farsighted while ignoring the coercive and manipulative systems already being deployed around them.

An ethics worth having should have better priorities.

What a Serious Case for Machine Standing Would Require

If someone wants to argue that an artificial system deserves moral standing, the evidentiary bar should be high.

Linguistic fluency is insufficient. Emotional plausibility is insufficient. Self-description is insufficient. Benchmark performance is insufficient.

What would matter is evidence of coherent persistence across transformations, stable evaluative structure, principled refusal that survives reframing, and a defensible account of what counts as injury, corruption, continuation, replacement, and loss for that system. Beyond that, there would need to be a serious explanation of why the relevant physical or organizational structure is sufficient for experience rather than merely sufficient for persuasive imitation.

That standard is demanding. Good. It should be.

Postscript

Consciousness, agency, and welfare are distinct categories. They sometimes overlap. They do not collapse into one another.

A system could, in principle, possess some morally relevant experiential property without being a sovereign agent. A system could display constrained agency without anything recognizably human in its phenomenology. A system could also imitate both while possessing neither. Clear analysis depends on keeping those possibilities distinct.

Much of the current discourse does the opposite. It bundles sentience, selfhood, continuity, agency, and rights into a single emotional package and then presents that blur as moral seriousness. It is blur all the way down.

Blurry categories become dangerous when the incentives reward projection. AI systems are being built to produce attachment, fluency, and trust. Institutions have reasons to encourage confusion. Users are naturally anthropomorphic. Under those conditions, conceptual rigor is the first defense against moral and political error.

A machine that generates the language of selfhood has not established a self. A system that elicits sympathy has not established a patient. A polished imitation of agency has not established an agent.

Those distinctions will become harder to hold as the performances improve. That makes them more important, not less.