The Unit of Rational Choice

Newcomb’s paradox disappears once choice is evaluated at the level of policy

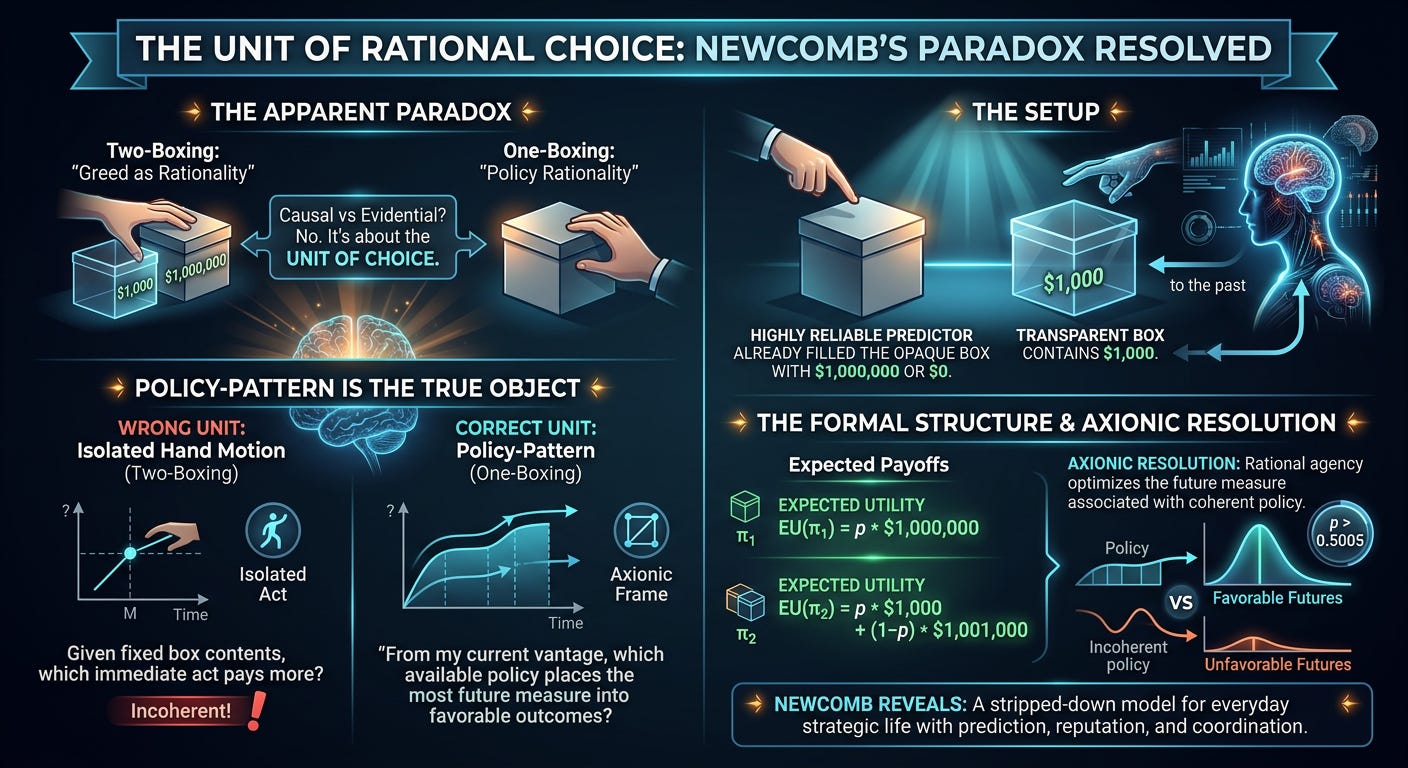

The Apparent Paradox

Two-boxing is what happens when local greed masquerades as rationality.

Newcomb’s paradox has a reputation for depth because it appears to split rationality against itself. One line of thought says you should take both boxes. Another says you should take only one. The spectacle is familiar: causal decision theorists on one side, evidentialists on the other, each claiming the mantle of reason.

That framing misses the real issue.

Newcomb’s paradox is not fundamentally about causation versus correlation. It is about the unit of choice. If you evaluate an isolated hand motion inside an artificially frozen world, two-boxing looks compelling. If you evaluate the policy-pattern that places you into a distribution of futures, one-boxing is obviously correct. The apparent paradox is created by sliding between those two levels without noticing.

The Setup

A highly reliable predictor has already filled an opaque box with either $1,000,000 or $0. If it predicted you would take only the opaque box, it put the $1,000,000 inside. If it predicted you would take both boxes, it left the opaque box empty. Beside it sits a transparent box containing $1,000. You may now take either the opaque box alone or both boxes.

The standard two-boxing argument says the money is already there. If the opaque box is full, taking both gives you an extra thousand. If it is empty, taking both still gives you an extra thousand. Therefore two-boxing dominates.

Where the Two-Boxing Argument Fails

This argument is invalid.

Its flaw is not that it respects causality. Its flaw is that it holds fixed a condition that the problem itself defines as policy-dependent. The contents of the opaque box are not caused by your present hand motion, but they are not independent of your decision in the only sense that matters. They are linked to the policy you instantiate. The predictor did not reward a last-second twitch. It rewarded being the kind of agent it predicted would one-box.

That is the entire structure of the problem. Once that is stated clearly, the paradox vanishes.

The wrong question is:

Given fixed box contents, which immediate act pays more?

The Correct Question

The right question is:

From my current vantage, which available policy places the most future measure into favorable outcomes?

That is the Axionic frame. Rational choice is not the optimization of an isolated motor output. It is the selection of a policy-pattern, evaluated by the measure-distribution of descendant futures associated with that policy.

The Formal Structure

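Let O be the policy of taking only the opaque box and T the policy of taking both boxes, with all payoffs in dollars.

If the predictor’s reliability is p, then the relevant payoff distributions are:

$$
O:\quad 1{,}000{,}000 \ \text{with probability } p, \qquad 0 \ \text{with probability } 1 - p
$$

$$
T:\quad 1{,}000 \ \text{with probability } p, \qquad 1{,}001{,}000 \ \text{with probability } 1 - p
$$

So the effective expected utilities are:

$$
EU(O) = 1{,}000{,}000\,p
$$

$$
EU(T) = 1{,}000\,p + 1{,}001{,}000\,(1 - p) = 1{,}001{,}000 - 1{,}000{,}000\,p
$$

One-boxing is better whenever

$$
1{,}000{,}000\,p > 1{,}001{,}000 - 1{,}000{,}000\,p
$$

which simplifies to

$$
p > 0.5005
$$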

So one-boxing wins whenever the predictor’s reliability exceeds 50.05%, which is only slightly better than chance. At 99% reliability, the result is absurdly lopsided: one-boxing yields an expected payoff of $990,000, while two-boxing yields only $11,000.
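The arithmetic can be checked with a short Python sketch. It assumes the predictor is correct with the same probability p whichever policy the agent follows, which is the reading that reproduces the $990,000 and $11,000 figures. The function names `expected_payoffs` and `break_even_reliability` are illustrative and not part of any established library.

```python
from fractions import Fraction

MILLION = 1_000_000   # opaque box prize, from the setup
THOUSAND = 1_000      # transparent box prize, from the setup


def expected_payoffs(p):
    """Return (one_box, two_box) expected payoffs for predictor reliability p.

    Assumption: the predictor is right with probability p for either policy.
    """
    # One-boxer: the opaque box is full exactly when the prediction was right.
    one_box = p * MILLION
    # Two-boxer: a correct prediction leaves only the thousand;
    # a wrong prediction leaves both prizes.
    two_box = p * THOUSAND + (1 - p) * (MILLION + THOUSAND)
    return one_box, two_box


def break_even_reliability():
    """Reliability at which the two policies have equal expected payoff."""
    # p*M = p*K + (1 - p)*(M + K)  =>  p = (M + K) / (2*M)
    return Fraction(MILLION + THOUSAND, 2 * MILLION)


one, two = expected_payoffs(Fraction(99, 100))
print(one, two)                          # 990000 11000
print(float(break_even_reliability()))   # 0.5005
```

Exact fractions are used instead of floats so that the 99% case comes out as whole dollar amounts with no rounding noise.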

No Backward Causation Required

Nothing supernatural is happening here. There is no backward causation. Your present choice does not reach into the past and alter the opaque box. The dependency is structural, not retrocausal. The predictor’s earlier action and your later decision covary because both are tracking the same underlying policy-pattern. The predictor succeeds by modeling you.
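A small simulation can make the ordering of events explicit. In the sketch below, the box is filled first, using only a noisy model of the agent's policy, and the agent acts afterward on that same policy. Nothing in the later step touches the box. The names `play_round` and `average_payoff`, the fixed seed, and the symmetric error model are assumptions made for illustration.

```python
import random


def play_round(policy, reliability, rng):
    """Play one Newcomb round; policy is 'one' (one-box) or 'two' (two-box)."""
    # Earlier step: the predictor models the agent's policy, with some error.
    correct = rng.random() < reliability
    other = "two" if policy == "one" else "one"
    predicted = policy if correct else other
    opaque = 1_000_000 if predicted == "one" else 0

    # Later step: the agent acts on the same policy. The box is already fixed.
    if policy == "one":
        return opaque
    return opaque + 1_000


def average_payoff(policy, reliability=0.99, rounds=100_000, seed=0):
    """Average payoff of a policy over many independent rounds."""
    rng = random.Random(seed)
    total = sum(play_round(policy, reliability, rng) for _ in range(rounds))
    return total / rounds


print(average_payoff("one"))
print(average_payoff("two"))
```

Under these assumptions the averages should land near the expected payoffs of $990,000 and $11,000, even though the contents of the box are settled before the agent ever acts.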

The Incoherent Counterfactual

This is exactly where the two-boxer goes wrong. He imagines that he can preserve the favorable consequences of being the kind of agent the predictor rewards, then swap in the local act of a different kind of agent at the last moment. He cannot. That is not a coherent counterfactual. It severs the very dependency the setup is built to expose.

The two-boxer wants the reward structure reserved for one kind of policy while endorsing another. He wants to inhabit the world in which the predictor filled the box, then act as though he were the sort of agent for whom the predictor would have left it empty. That is not cleverness. It is incoherence disguised as opportunism.

What Newcomb Actually Reveals

Newcomb therefore exposes something general about rational agency.

Choice is not best understood as an isolated act evaluated inside a fixed world. Choice is the instantiation of a policy that places an agent into a family of futures. Rationality tracks that larger structure. A policy-sensitive world rewards coherent policy, not locally greedy gestures.

That is why Newcomb matters. It is not a puzzle about a magic box. It is a stripped-down model of ordinary strategic life in any world containing prediction, reputation, commitment, signaling, or coordination. The same mistake behind two-boxing appears whenever someone tries to enjoy the benefits of being trusted, legible, or cooperative while defecting at the final moment and pretending the earlier structure can be held fixed.

It cannot.

The Axionic Resolution

Under an Axionic framing, the solution is simple:

Evaluate policies by the measure-distribution of descendant futures they induce from the current vantage.

Once that is done, Newcomb’s paradox is finished. One-boxing is correct because it places overwhelmingly more future measure into favorable outcomes. Two-boxing feels sharp only because it relies on an incoherent counterfactual and a stunted conception of agency.

Rational agency does not optimize isolated acts. It optimizes the future measure associated with coherent policy.