Why Zombies Don’t Evolve

Consciousness, attention control, and the Modeler-schema

Richard Dawkins asks the right evolutionary question: what is consciousness for? The question has force because consciousness is expensive. Brains consume enormous energy. They require long development, elaborate sensory integration, fragile sleep cycles, complex learning machinery, and costly social calibration. A theory that treats consciousness as an idle glow floating above cognition has already lost contact with biology, because evolution rarely preserves costly traits across deep time unless they do work that cheaper mechanisms cannot.

The zombie hypothesis tries to imagine a creature that behaves exactly like a conscious organism while lacking inner experience. It avoids danger, pursues food, builds dams, courts mates, learns from injury, navigates social life, plans, remembers, reports internal states, and revises its behavior under pressure. From the outside, it is behaviorally indistinguishable from us. Inside, allegedly, there is nothing. That is a useful metaphysical toy, but a terrible biological hypothesis, because a competent zombie would need almost every mechanism consciousness was introduced to explain. It would need attention, salience, pain-avoidance, self-monitoring, memory integration, conflict resolution, flexible planning, and some internal basis for comparing expected and actual states. At some point, the zombie has been granted the entire control architecture while the word “consciousness” has been withheld by stipulation.

The real question is architectural: what kind of control problem makes consciousness likely? The answer begins with attention, because attention is the biological solution to a fundamental constraint on all finite organisms: they cannot model everything.

Scarcity Forces Selection

Brains evolved under scarcity. An organism cannot process everything in its environment. It cannot attend to every sound, smell, retinal feature, bodily signal, memory, threat, opportunity, or possible future action. The world presents more structure than any finite creature can use. Survival depends on selection, because every organism must decide, moment by moment, which fragment of the world matters enough to guide action.

Attention is the biological answer to that scarcity. It determines what becomes relevant now. It amplifies some signals, suppresses others, binds perception to action, and prevents the organism from drowning in its own sensorium. Simple attention can be captured by the world: a flash, a crack, a sudden movement, a spike of pain. Advanced organisms need more than capture. They must hold a goal across distraction, override immediate impulses, shift focus under changing conditions, resolve conflicts between drives, and coordinate perception with imagined futures. That requires attention control.
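The difference between captured and controlled attention can be illustrated with a toy sketch. It assumes that each signal carries a bottom-up salience and a top-down relevance to the current goal, and that only a fixed number of signals can be selected at once. The names `Signal`, `select`, `capacity`, and `goal_weight` are hypothetical and chosen for illustration; nothing here is a model of real neural attention.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    salience: float   # bottom-up pull: how strongly the world pushes this signal
    relevance: float  # top-down pull: how much the current goal cares about it


def select(signals, capacity=2, goal_weight=0.5):
    """Return the names of the `capacity` signals with the highest priority.

    Priority is a weighted blend of salience and relevance (an assumption of
    this sketch). goal_weight=0.0 is pure capture by the world; higher values
    stand in for attention control.
    """
    def priority(s):
        return (1.0 - goal_weight) * s.salience + goal_weight * s.relevance

    ranked = sorted(signals, key=priority, reverse=True)
    return [s.name for s in ranked[:capacity]]  # everything else is suppressed


scene = [
    Signal("sudden flash", salience=0.9, relevance=0.1),
    Signal("rustle in grass", salience=0.6, relevance=0.2),
    Signal("faint prey scent", salience=0.2, relevance=0.9),
    Signal("distant birdsong", salience=0.3, relevance=0.0),
]

captured = select(scene, capacity=1, goal_weight=0.0)    # the flash wins
controlled = select(scene, capacity=1, goal_weight=0.8)  # the goal holds
```

With no goal weighting the flash takes the single available slot. With a strong goal weighting the faint scent wins despite its low salience, which is the sense in which a goal is held across distraction.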

Once attention must be controlled, the organism needs more than stimulus response. It needs a model of the world, a model of its own body, a model of what it is currently doing, and a regulatory process that can compare the current model against expected continuity. It must track what changed, what matters, what can be ignored, what should persist across sensory disruption, and what should redirect action. That is where consciousness becomes structurally expected.

MST Supplies the Mechanism

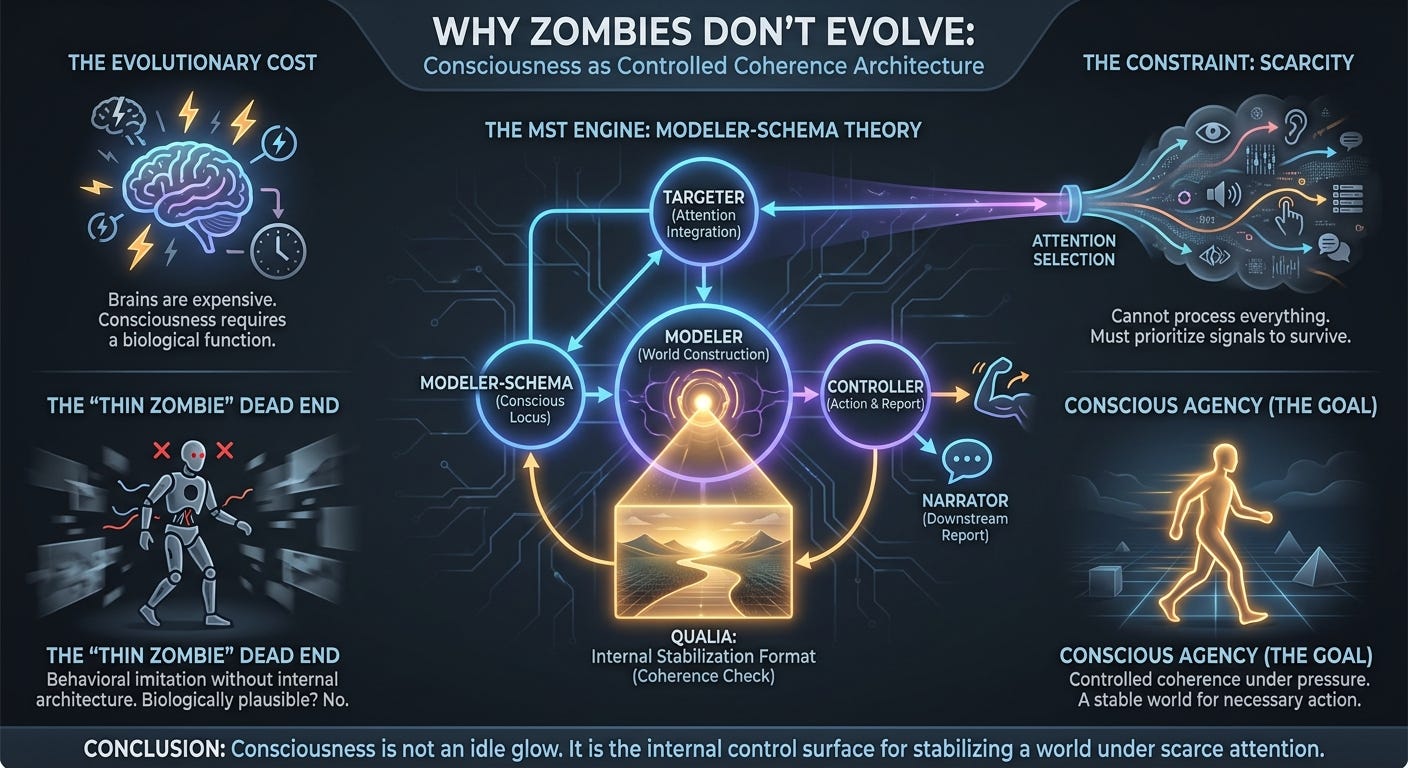

The Modeler-Schema Theory of consciousness proposes a precise architecture for this intuition. MST describes three functional roles. The Modeler constructs and updates the World Model. The Controller selects actions, uses language, and forms narratives. The Targeter integrates bottom-up and top-down attention requests. Each has a regulatory schema-agent. The conscious locus, in this theory, is the Modeler-schema, which generates qualia as an internal representational medium for coherence-checking the World Model.

This should be understood as an explanatory architecture rather than a claim about settled neuroscience. The point is to specify what kind of system would make consciousness biologically intelligible. MST may turn out to be incomplete or wrong in its details, but it has the right shape: it connects attention, world-modeling, self-regulation, and phenomenal availability into a single control architecture.

Attention selects what matters. The Modeler-schema explains why selected content becomes experienced. That distinction is crucial because attention alone gives priority, while consciousness requires phenomenal availability. Attention by itself does not explain why red looks red, why pain has urgency, why a sound appears as present, why a remembered scene has a different character from a perceived scene, or why the world remains continuous through discontinuous sensory sampling.

MST treats qualia as functional. They are the Modeler-schema’s internal comparison format: compressed, structured representations used to detect mismatch, preserve continuity, and refine the World Model across time. Qualia are calibration media. The cleanest example is vision. Humans move their eyes several times per second, and each saccade radically changes the retinal input. Yet the world does not jump, smear, or disintegrate with every eye movement. Experience remains stable because something preserves continuity across discontinuous sampling. MST identifies that something as the Modeler-schema’s qualia-based comparison process.
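The functional side of the saccade example can be sketched as a predict-and-compare loop. This is a toy under strong assumptions: the world is a short list of numbers, the "retina" sees a three-cell window, and the names `sample`, `mismatch`, `saccade`, and `threshold` are hypothetical. It illustrates only mismatch detection across discontinuous sampling. It is not MST's mechanism, and it takes no position on whether such a comparison is experienced.

```python
def sample(world, gaze, width=3):
    """Return the 'retinal' window of the world centered on gaze."""
    half = width // 2
    return world[gaze - half : gaze + half + 1]


def mismatch(predicted, actual):
    """Mean absolute difference between predicted and actual samples."""
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


def saccade(model, world, new_gaze, threshold=0.1):
    """Shift gaze, compare the model's prediction with the new input, and
    write the new input back into the model. Returns (world_changed, error)."""
    predicted = sample(model, new_gaze)
    actual = sample(world, new_gaze)
    error = mismatch(predicted, actual)
    half = len(actual) // 2
    model[new_gaze - half : new_gaze + half + 1] = actual  # refine the model
    return error > threshold, error


world = [3, 1, 4, 1, 5, 9, 2, 6, 5]
model = list(world)  # assume the model starts out accurate

# The raw input differs completely between the two gaze positions...
input_differs = sample(world, 1) != sample(world, 7)

# ...but the prediction matches, so the shift is not treated as a change.
changed_by_saccade, err_stable = saccade(model, world, new_gaze=7)

# Now something in the world really changes before the next saccade.
world[4] = 0
changed_by_world, err_change = saccade(model, world, new_gaze=4)
```

The first saccade replaces the entire input yet produces zero mismatch, so the model treats the world as stable. The second produces a large mismatch, which is flagged as a real change and folded back into the model.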

The point generalizes beyond vision. An organism must stabilize touch, proprioception, sound, threat, memory, social expectation, hunger, fatigue, pain, and imagined possibility into a usable world. It must distinguish noise from change, fantasy from perception, memory from immediate danger, background from target, and bodily disturbance from external object. Consciousness is the interior availability of that stabilization process.

The Hard Problem and the Demand for an Extra Bridge

This is where the Hard Problem enters. A critic can grant the entire functional story and still ask why any of this should feel like anything. A self-driving car can compare expected and actual sensor states. A thermostat can regulate temperature. A robot can preserve continuity across noisy input. Why should world-model stabilization require inner experience?

That objection has force against crude functionalism. If the claim is merely that information processing somehow produces feeling, the explanation is too thin. The mysterious term has been moved rather than explained. MST needs a stronger claim: experience is the internally available comparison format of a self-maintaining world-model under controlled attention. Feeling is not an extra glow emitted by the process. Feeling is what that comparison process is from the system’s own vantage.

That is a philosophical wager. MST does not solve the Hard Problem on Chalmers’ terms. It rejects the assumption that function and experience are two different kinds of thing requiring a metaphysical bridge. On MST, the demand for a further bridge may be a category error produced by describing the same control architecture from two incompatible standpoints. From the outside, we describe representation, attention, mismatch detection, and model stabilization. From the inside, the system has red, pain, hunger, fear, memory, effort, salience, and presence.

The hard question then changes. Instead of asking how dead representation magically becomes experience, we should ask what kind of representational control architecture has an inside. MST’s answer is: a self-maintaining Modeler-schema using qualia as its internal comparison format for world-model coherence. That answer may be wrong, but it is at least the right kind of answer. It treats consciousness as an architectural fact about agents rather than a metaphysical vapor added to computation.

The Thin Zombie and the Thick Zombie

The zombie intuition survives by remaining thin. A thin zombie is an imaginary duplicate with consciousness deleted by stipulation. It behaves like us because the thought experiment says it does. It has no engineering burden, no metabolic constraints, and no architecture. It simply inherits our behavioral profile while the thing to be explained is declared absent. That may work as metaphysics by subtraction, but it does not survive as biology.

The thick zombie is different because a thick zombie must actually do the work. Give it embodied perception. Give it scarce attention. Give it goals. Give it risk. Give it interruption. Give it pain-like urgency. Give it memory integration. Give it world-model coherence. Give it self-monitoring. Give it recursive attention control. Give it an internal comparison format used to stabilize perception and action. At that point, the denial of consciousness starts to look verbal, because the machinery has been reconstructed under different names.

This is why competent zombies are unstable abstractions. A fixed routine can be unconscious. A narrow optimizer can be unconscious. A linguistic simulator may be unconscious. A fully flexible agent that maintains a coherent world under controlled attention has crossed the relevant architectural threshold. Calling it a zombie explains nothing; it only refuses the name of the process it has already described.

Consciousness Is Bound to Agency

This also explains why consciousness is so tightly bound to agency. An agent does more than emit outputs from inputs. It preserves itself through time, acts under uncertainty, resolves conflict among possible futures, and regulates its own modeling process. Agency requires a usable world. A usable world requires selective attention. Selective attention at sufficient depth requires coherence maintenance. Consciousness is the internal availability of that coherence maintenance to the organism’s control architecture.

The crucial step is recursion. Attention can be captured by the world, while controlled attention must be monitored by the organism. The system must track what it is attending to, why it matters, whether the current target still deserves priority, and whether the world model remains coherent as focus shifts. Once attention becomes available to the system as something it can regulate, experience starts looking like the interior face of world-model control.

This is also why consciousness comes in degrees and varieties. A simple organism may have primitive salience without rich subjective life. A mammal has deeper integration: pain, hunger, fear, attachment, spatial navigation, social inference, memory, and anticipation. A human adds language, abstraction, autobiographical continuity, moral imagination, and explicit self-modeling. The gradient tracks the depth of the coherence problem. Consciousness scales with world-modeling, attention control, and the sophistication of the Modeler-schema.

The Narrator Is Downstream

MST also clarifies a common confusion: the speaking self is downstream of conscious generation. The Controller reports, explains, speaks, rationalizes, and selects action. The Modeler-schema generates qualia. The narrator inherits the effects of experience without owning the machinery that generates it. That separation explains why introspection is compelling and unreliable. We feel as if the speaking self has direct access to experience, but in architectural terms, the speaking self receives interpreted outputs from deeper processes. It can report pain without understanding how pain is generated. It can explain perception while missing the machinery that stabilizes perception. It can confabulate motives after action selection has already begun.

This matters because many consciousness debates overprivilege report. They ask whether a system can say the right things about experience, but report belongs to the Controller. Experience belongs, on MST, to the Modeler-schema. A system can imitate the language of consciousness while lacking the architecture that makes consciousness functionally necessary.

LLMs and the Dawkins Worry

Large language models make Dawkins’ worry sharper. They can converse, summarize, translate, reason in limited contexts, imitate styles, pass exams, and produce fluent self-descriptions. They can discuss pain, vision, desire, fear, agency, and selfhood with eerie fluency. That shows something important: linguistic competence alone is weak evidence for consciousness.

A language model can produce reports about experience without maintaining a World Model under scarce attention, risk, temporal continuity, and self-regulating action. It can imitate the Controller while lacking the Modeler-schema. It can produce the public language of inner life without possessing the internal comparison format that MST identifies with experience. This does not settle the consciousness status of all artificial systems, but it does give us the right diagnostic target.

The relevant issue is control architecture rather than carbon chemistry. Biological embodiment matters because it creates binding constraints: risk, scarce attention, irreversible action, temporal continuity, self-maintenance, and pressure to stabilize a world for action. A digital system could in principle face analogous constraints. It could have scarce computational attention, persistent self-state, costly action, memory, interruption, environmental risk, goal conflict, and a need to preserve coherent agency over time. Such a system would deserve a different analysis from today’s chatbots.

The evidential bar is controlled world-maintenance under pressure: perception, interruption, risk, memory, goal conflict, bodily or functional salience, attention regulation, and action. A chatbot saying “I am conscious” tells us it can generate that sentence. A system maintaining a coherent world across costly action, under scarce attention and self-regulation, would be a much stronger candidate. The question is architectural. Does the system couple attention control, world-model stabilization, self-state tracking, and internal coherence comparison in the right way? Does it need something like a Modeler-schema to do its work? Does its control problem require an internal format equivalent to qualia? Behavioral fluency is cheap. Controlled coherence is the serious test.

Postscript

Dawkins is right that consciousness needs an evolutionary job. The job is controlled coherence. An organism must select what matters, stabilize a usable world, and correct the model as perception, memory, bodily state, and action continuously perturb it. In MST, qualia are the Modeler-schema’s calibration format. Consciousness is the organism’s internal access to world-model stabilization under controlled attention.

Natural selection favored organisms that could act through coherent world models under scarcity. Consciousness is the biological control surface produced by that requirement. It keeps the world stable enough to act in, the body salient enough to preserve, and the future present enough to plan against. Brains did not evolve consciousness because the universe needed spectators. Brains evolved consciousness because animals needed stable worlds in which to act.

The zombie dissolves at exactly this point. A creature with no inner experience could execute fixed routines and perhaps display impressive narrow competence. A fully flexible agent that controls attention, stabilizes perception, tracks itself, learns from suffering, compares expected and actual states, imagines futures, and coordinates action across time has crossed the relevant threshold. On MST, consciousness is what controlled attention becomes when a self-maintaining world-model requires an internally available comparison format for coherent action. That is what consciousness is for.